Human-in-the-loop Done Right

"Human-native" agentic development from first principles

May 5, 2026

Some resist the adoption of agentic development, citing the need to retain visibilty over critical business logic;

Others call for a fully agentic development workflow, downplaying the operational risks it introduces to teams not equipped to deal with the onsalught of slop.

At Bicameral, we are interested in exploring the optimal surface for teams looking to engage with agentic development in production environments, that does not require them to sacrifice either speed or control.

In this article, we summarize how we came to the conlusion that the highest-leverage solution to this problem, moreso than adopting some specialized harness or agent orchestration, is through tackling the handoff friction between humans.

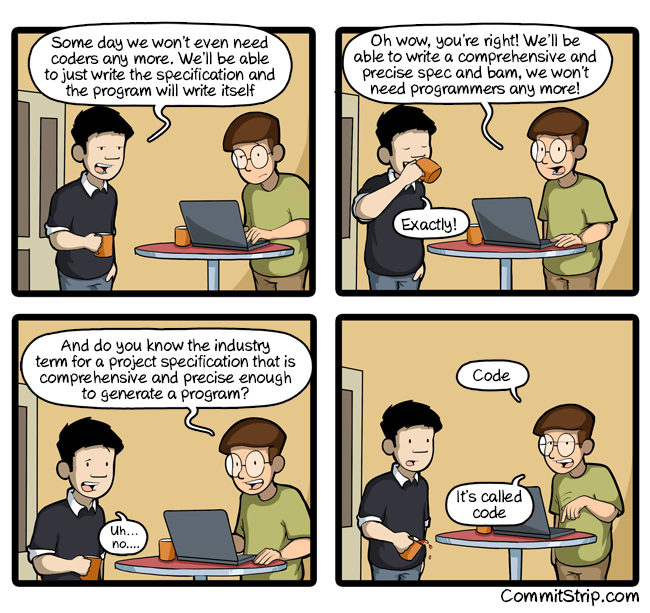

A sufficiently detailed spec is code

In our previous piece Why We Bet on Humans, we highlighted that the essential complexity of software development lies in the fact that it is unbounded problem.

Software engineers, unlike chemists, physicists, or even hardware engineers, are forced to conform to a complex environment dictated by laws of physics, by social conventions, by users’ expectations, and by other software.

— Fred Brooks, No Silver Bullet — Essence and Accidents of Software Engineering (1986)

Brooks, of the “Mythical Man-Month” fame, cited that previous advancements such as object-oriented programming or time-sharing sought to bring about paradigm shift in software development, but when all is said and done they only esulted in marginal gains in overall productivity.

Brooks reasoned that this is because those advancements tackled the accidental complexity of writing code, not the essential complexity of deciding what to code in the first place. The core challenge, in other words, lies in the design and specification of the software — an activity that involves aligning on loosely-defined tradeoffs against arbitrary external constraints, for an ever-changing system.

How does this hold up in the age of AI? The problem of specification has certainly received its fair share of attention recently, from ChatPRD’s gap detection offering to gstack’s opinionated pushbacks.

Although they do not explicitly make this claim, the premise of these solutions is that prompting AI to surface pushback is sufficient in closing specification gaps for most practical purposes.

Let us zoom in a little bit to examine whether Brook’s argument had somehow been rendered obsolete by LLMs.

In “A sufficiently detailed spec is code”, Gabriella Gonzalez makes the incisive claim that the concept of software specification is commonly misunderstood in two important ways: 1. that it is simpler than writing code and 2. that it is simultaneous a more thoughtful (read: creative) activity.

Referencing Dijkstra’s 1979 essay on the foolishness of “natural language programming”, Gonzalez argues that formal notation evolved precisely because natural language is too ambiguous to carry the precision a working system requires.

A document loose enough to read like “product writing” is a document that has not absorbed the precision the agent will need at the moment of code generation. Either the gaps surface as ambiguity in the output, or the agent quietly fills them with whatever its training distribution suggests. Changing the interface from Python to English doesn’t eliminate the underlying labor, it only disguises where the labor lives.

There is no world where you input a document lacking clarity and detail and get a coding agent to reliably fill in that missing clarity and detail.

— Gabriella Gonzalez, “A sufficiently detailed spec is code” (2026)

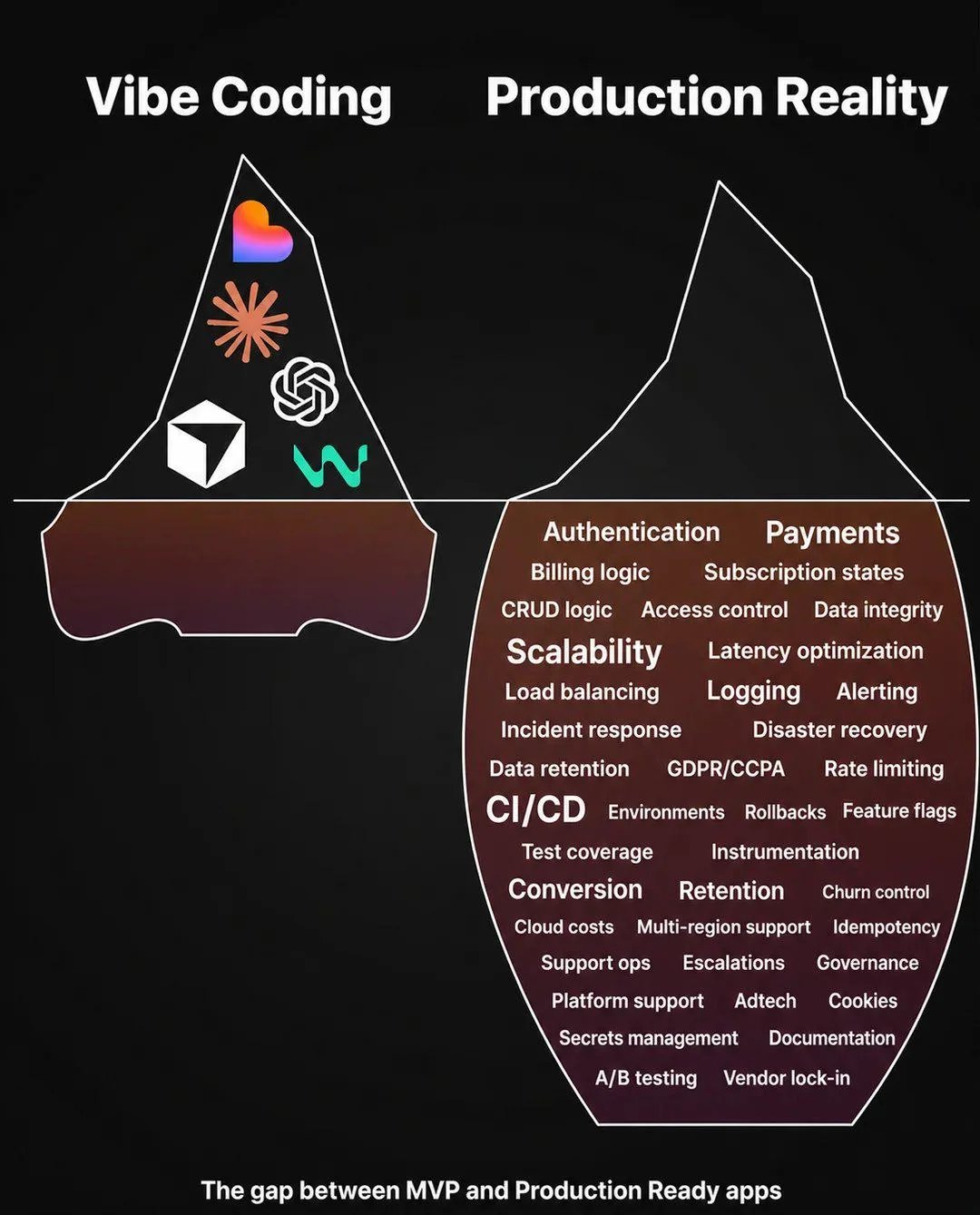

The cases where agentic coding genuinely works — landing pages, CRUD apps, dropshipping sites — are attainable because they can rely on thousand similar projects in its training data, not because specification has inherently become easier.

This is not something to trivialize for sure - your lawyer, technician, can now 10x their operation with vibecoded CRMs. These are all positive news.

This however does not apply for the majority of cases where creating custom software informed by specific busines requirements is the core task. In these cases, AI accelerated the writing part of coding, not the thinking part developers had always been doing this whole time.

Where AI fails us is when we build new software to improve the business. The tasks are never really well defined. Sometimes developers come up with a better way to do the business process than what was planned for. AI can write the code, but it doesn’t refuse to write the code without first being told why it wouldn’t be a better idea to do X first. — Quothling

Every engineering triage that deviates from the PRD to unblock usability, every commitment to service architecture that unlocks future development is product thinking thinly veiled as code, and undermining this fact merely descends into firefighting.

It perhaps isn’t surprising that only 15% of AI decision-makers report that their AI initiatives have produced a positive impact on earnings (EBITDA lift) in the past 12 months. The benefactor from this vibecoding boom has been in a minority of industries where the accidental complexity of writing code is the chief technical hurdle.

LLM as agent of clarity

Yet we wouldn’t be building in this space if we didn’t believe that LLMs had a real shot at tackling the essential complexity of software development.

Better yet, we believe that the only path to realizing its true transformative power is not through the Malthusian approach of substituting developers, but by doubling down on developers’ role as the safekeepers of software integrity.

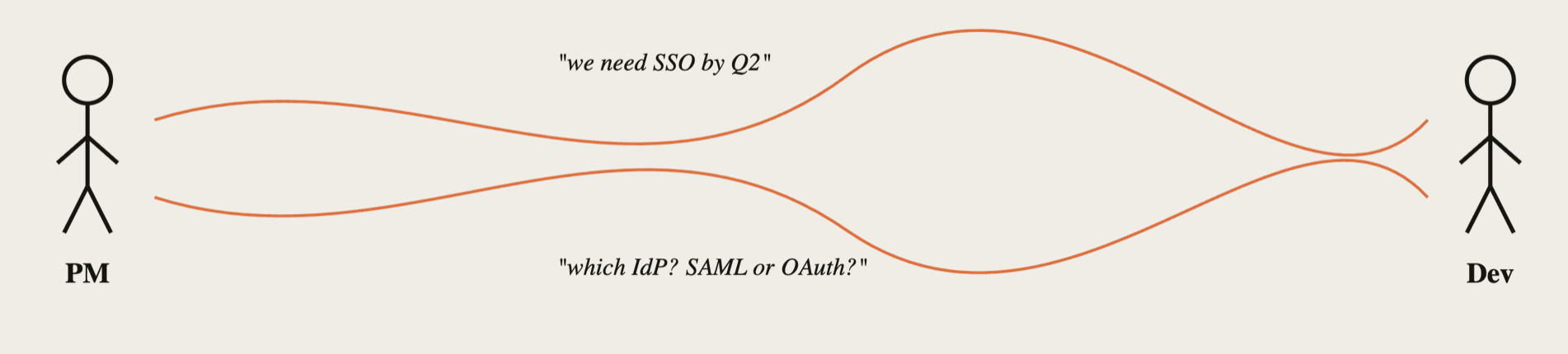

As we explored in our previous post, communication friction lies at the root. Framed in terms of what we discussed above, shrinking the accidental complexity of writing code has only made the difficulties arising from essential complexity---agreeing on what to build--- more urgent and acute.

Consider how AI is integrated into software development right now.

- Humans are asked to review thousands of lines of generated code where the cognitive load involved results in rubber-stamp approval

- AI context layers are opaque, requiring users to place trust in their long-running reliability

- Vibecoded apps optimize for functional visibility (what can be demoed) at the expense of non-functional integrity (security, maintainability, performance).

These are diametrically opposed to the developer’s role to bring structure and decrease ambiguity in a software system. It is taking the already complex task of making the right tradeoffs for an evolving product and injecting more entropy into the mix.

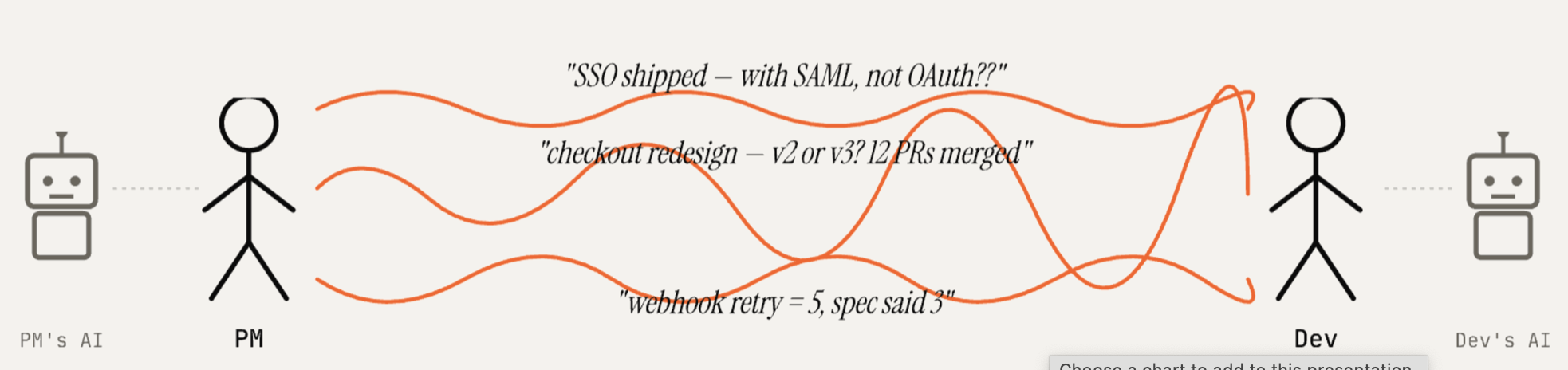

We thus take the contrarian bet: given that LLMs are functionally translators, not just syntactically between languages but also semantically across domains, we apply it instead to address the cross-functional communication gap head on.

Rather than assuming that a complete specification exists ahead of time (rarely the case) that can be fed into agents for autonomous software development, we aim instead to assist teams in making informed decisions at every juncture along the developmental lifecycle, and to do so at greater velocity.

We envision a future paradigm where each role on a software team is given the means to improve the aspect that they care about, with product owning the functional aspects (features, workflows etc.) and engineering non-functional (security, maintability, performance), ensuring that the complete user experience is accounted for.

With that as the north star dynamic, our job is to facilitate a two-way flow of information:

- Tracking product context so that developers can make good non-functional decisions

- Translating architectural implications so that product managers can make good feature prioritization decisions

Human-in-the-loop done right should result in expertise on the team compounding rather than diminishing. A product manager given visibility into architectural tradeoffs will develop the intuition to make better tactical choices over time; a developer who is kept in the loop on product evolution can make progressively better architectural choices.

This, we believe, is the way to realize the transformative power of LLMs in tackling the essential complexity of software development.

The Decision Machine

Our product roadmap begins with tracking the full lifecycle smallest unit of specification: an implementation decision.

This is the spine of Bicameral:

- Decisions as first-class entities — each one linked to source text (PRD, transcript) and grounded in code

- Two-sided ledger — team decisions injected as context to guide agentic development, implicit architectural decisions made by agents tracked for later human review

- Escalation over recommendation — flagging consequential decisions that requires cross-functional negotiation rather than making black-box suggestions

We’ve released the first version as an open-source MCP server. It works with Claude today, with support for other coding agents rolling out next. Try it →

References

- Fred Brooks, No Silver Bullet — Essence and Accidents of Software Engineering (1986).

- Gabriella Gonzalez, “A sufficiently detailed spec is code” (2026).

- Edsger W. Dijkstra, “On the foolishness of ‘natural language programming’” (1979).

- Forrester, “Predictions 2026: AI moves from hype to hard hat work” (2026).

- Bicameral AI, Why We Bet on Humans.